An Analysis of Computer-Based Assessment in the School of Mathematics and Statistics

a. Introduction

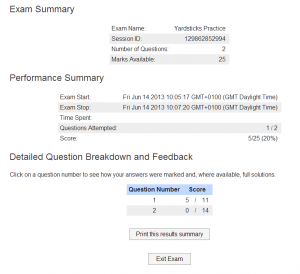

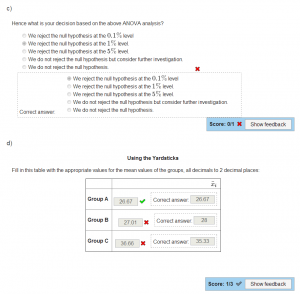

Since 2008 the School of Mathematics and Statistics has incorporated computer-based assessments (CBAs) into its summative, continuous assessment of undergraduate courses, alongside conventional written assignments. These CBAs present mathematical questions, which usually feature equations with randomized coefficients, and then receive and assess a user-input answer, which may be in the form of a numerical or algebraic expression. Feedback in the form of a model solution is then provided to the student.

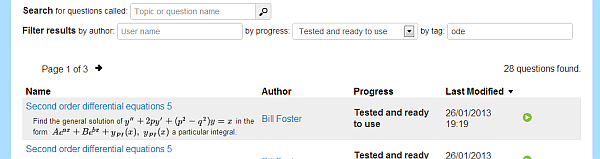

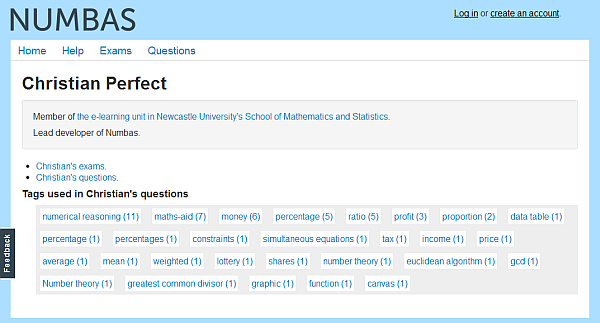

From 2006 until the last academic year (2011/2012), the School employed the commercial i-assess CBA software. However, this year (2012/2013) the School rolled out a CBA package developed in-house, Numbas, to its stage 1 undergraduate cohort. This software offers greater control and flexibility than its predecessor to optimize student learning and assessment. As such, this was an opportune time to gather the first formal student feedback on CBAs within the School. This feedback, gathered from the stage 1 cohort over two consecutive years, would provide insight into the student experience and perception of CBAs, assess the introduction of the new Numbas package, and stimulate ideas for further improving this tool.

After an overview of CBAs in Section b and their role in mathematics pedagogy in Section c, their use in the School of Mathematics and Statistics is summarized in Section d. In Section e the gathering of feedback via questionnaire is outlined and the results presented. In Section f we proceed to analyze the results in terms of learning, student experience, and areas for further improvement. Finally, in Section g, some general conclusions are presented.

b. A Background to CBAs

Box 1: Capabilities of the current generation of mathematical CBA software.

- Questions can be posed with randomized parameters such that each realization of the question is numerically different.

- Model solutions can be presented for each specific set of parameters.

- Algebraic answers can be input by the user (often via LaTeX commands), usually supported by a previewer for visual checking.

- Judged mathematical entry (JME) is employed to assess the correctness of algebraic answers.

- Questions can be broken into several parts, with a different answer for each part.

- In addition to algebraic and numerical answers, more rudimentary multiple-choice, true/false and matching questions are available.

- CBA marks can be entered automatically into the module mark database.
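The first few capabilities in Box 1 (randomized parameters, a matching model solution, and judging an algebraic answer) can be illustrated with a minimal Python sketch. It is not taken from Numbas, i-assess, or any other package named in this article: the function names `make_question`, `evaluate` and `is_equivalent` are hypothetical, and judging equivalence by comparing the two expressions at random sample points is an assumed, simplified stand-in for judged mathematical entry.

```python
import math
import random

# Names permitted inside an expression (an illustrative whitelist, not a real CBA API).
ALLOWED = {"sin": math.sin, "cos": math.cos, "exp": math.exp,
           "sqrt": math.sqrt, "pi": math.pi}


def make_question(rng):
    """Generate one realization of 'differentiate a*x**2 + b*x' with random a, b."""
    a = rng.randint(2, 9)
    b = rng.randint(1, 9)
    return {
        "params": (a, b),
        "prompt": f"Differentiate f(x) = {a}*x**2 + {b}*x with respect to x.",
        "answer": f"{2 * a}*x + {b}",
        # Model solution written for this specific set of parameters.
        "solution": (f"d/dx({a}*x**2) = {2 * a}*x and d/dx({b}*x) = {b}, "
                     f"so f'(x) = {2 * a}*x + {b}."),
    }


def evaluate(expr, x):
    """Evaluate an expression string at x using only the whitelisted names."""
    # eval on untrusted input is unsafe; a real system would use a proper parser.
    return eval(expr, {"__builtins__": {}}, {**ALLOWED, "x": x})


def is_equivalent(student, expected, rng, trials=10, tol=1e-9):
    """Judge two expressions equal if they agree at several random points."""
    for _ in range(trials):
        x = rng.uniform(-5.0, 5.0)
        try:
            s = evaluate(student, x)
            e = evaluate(expected, x)
        except Exception:
            return False  # malformed or undefined input is marked wrong
        if not math.isclose(s, e, rel_tol=tol, abs_tol=tol):
            return False
    return True
```

With this approach a student who enters the terms in a different order is still marked correct, while an answer that drops a term is rejected. The sketch only checks the final answer and cannot award marks for method.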

Computer-based assessment (CBA) is the use of a computer to present an exercise, receive responses from the student, collate outcomes/marks and present feedback [10]. The use of CBAs has grown rapidly in recent years, often as part of computer-based learning [3]. Possible question styles include multiple choice and true/false questions, multimedia-based questions, and algebraic and numerical “gap fill” questions. The merits of CBAs are that, once set up, they provide economical and efficient assessment, instant feedback to students, flexibility over location and timing, and impartial marking. However, CBAs also have many restrictions. Perhaps their overriding limitation is that they lack the intelligence to assess methodology (rather, CBAs simply assess whether an answer is right or wrong). Other issues relating to CBAs are the high cost of set-up, the difficulty of awarding method marks, and the requirement for computer literacy [4].

In the early 1990s, CBAs were pioneered in university mathematics education through the CALM [6] and Mathwise [7] computer-based learning projects. Around the same time, commercial CBA software became available, e.g. the Question Mark Designer software [8]. These early platforms featured rudimentary question types such as multiple choice, true/false and input of real number answers. Motivated by the need to assess a wider range of mathematical content, the facility to input and assess algebraic answers emerged by the mid-1990s via computer-algebra packages. First was Maple’s AIM system [5, 14], followed by, e.g. CalMath [8], STACK [12], Maple T.A. [13], WebWork [14], and i-assess [15]. The current generation of mathematics CBA suites shares the same technical capabilities, summarized in Box 1.
