A long-standing limitation of Numbas has been the inability to offer “error carried forward” marking: the principle that a student should be penalised only once for an error made in an early part. When calculations build on each other, an error in the first part can make all the following answers incorrect, even if the student follows the correct methods from that point onwards.

Numbas now has a system of adaptive marking enabled by the replacement of question variables with the student’s answers to question parts.
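As a rough illustration of the idea (a sketch in Python, not the actual Numbas implementation), a later part can be re-marked with the student's earlier answer substituted for a question variable. The names `mark_parts`, `expected` and `replace` are hypothetical and chosen only for this example.

```python
def mark_parts(variables, parts, answers, tol=1e-9):
    """Mark each part; if an answer is wrong under the original variables,
    try again with earlier student answers substituted for chosen variables."""
    results = {}
    for name, part in parts.items():
        given = answers[name]
        # First attempt: mark against the original question variables.
        if abs(given - part["expected"](variables)) <= tol:
            results[name] = "correct"
            continue
        # Adaptive attempt: replace variables with the student's earlier answers.
        replacements = part.get("replace", {})
        adapted = dict(variables)
        for var, source_part in replacements.items():
            adapted[var] = answers[source_part]
        if replacements and abs(given - part["expected"](adapted)) <= tol:
            results[name] = "correct (error carried forward)"
        else:
            results[name] = "incorrect"
    return results


# Part a asks for `total`; part b asks for twice `total`.
variables = {"total": 7}
parts = {
    "a": {"expected": lambda v: v["total"]},
    "b": {"expected": lambda v: 2 * v["total"], "replace": {"total": "a"}},
}
# The student gets part a wrong (8 instead of 7) but then doubles it correctly.
answers = {"a": 8, "b": 16}
results = mark_parts(variables, parts, answers)
print(results)
```

Here the student loses the marks for part a only: part b is accepted because it follows correctly from the answer they gave to part a.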


Last week I gave a demonstration of Numbas at the CAA Conference in Zeist. As part of the submission process I had to submit a formal paper, which it turns out isn’t being included in the published proceedings because it was on the practice track. Instead, I thought I’d reproduce the paper here, since it offers a good, brief overview of Numbas. If you’d like the PDF version of the paper, click here.

Numbas is a free and open-source e-assessment system developed at Newcastle University. This demonstration highlights the key features of Numbas and the design considerations for an e-assessment tool focused on mathematics.

As it’s the end of the academic year, I decided to survey our students to get an idea of their attitudes towards the Computer-Based Assessments (CBAs) that form part of their courses.


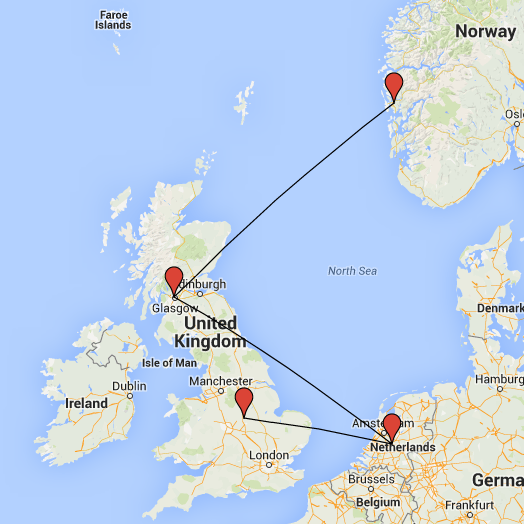

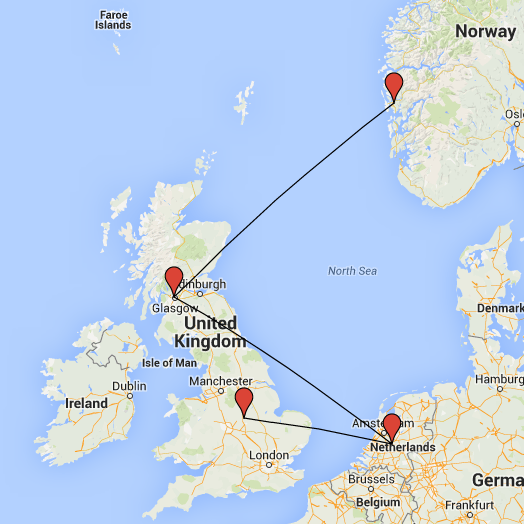

Last week we were in Bergen to give a keynote talk and workshop at the MatRIC Computer Aided Assessment in Mathematics Colloquium. A good time was had by all, despite the rain, and now we’re back in Newcastle preparing for even more talks and workshops. It’s a busy time of year!

On Friday the 12th of June, Bill is giving a talk at the IMA International Conference on Barriers and Enablers to Learning Maths: Enhancing Learning and Teaching for All Learners in Glasgow, titled “Online Practice in Mathematics and Statistics. A model for community collaboration”.

Next, Christian is in the Netherlands for the International Computer Assisted Assessment (CAA) Conference, where he’s giving a demonstration of Numbas on the 22nd of June.

Finally, we’re running a two-day workshop on Numbas at Loughborough University on the 6th and 7th of July, supported by the sigma network. The first day is aimed at introducing new users to Numbas, and the second day will cover more advanced use. The workshop is free to attend, so there’s nothing to stop you!

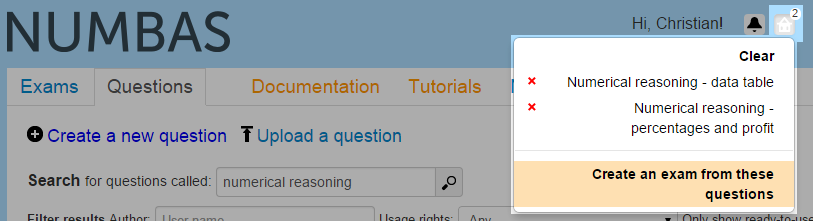

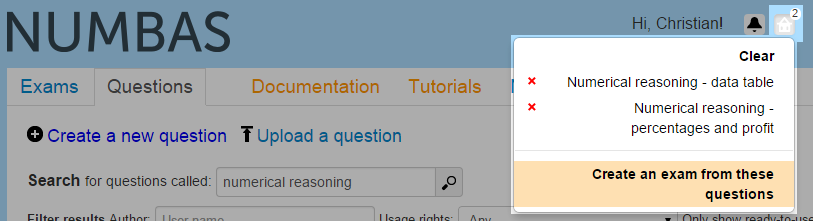

We’ve just updated the Numbas editor with another long-awaited feature: a “shopping basket” to keep questions while you browse the database. You can create an exam containing the questions in your basket with one click, or select individual questions from the basket inside the question editor. This resolves a long-standing problem with the exam editor – when there are dozens of questions with the same name, plus copies of those questions, it is very hard to find the ones you want in the miniature search interface included in the question editor.

I’ve updated the “create an exam” tutorial with instructions on how to use the new “shopping basket” feature.


It’s a good idea to work as part of a team when writing a Numbas question: you can proofread each other’s questions, suggest changes, and give the final OK when a question works. I’ve just added a few new features to the Numbas editor which make collaborating with others a bit easier.

We’ve just updated the Numbas editor with a “Licence” field on both questions and exams, which lets you specify the licence under which you allow other people to use your work. To begin with, you can pick from “All rights reserved” or any of the Creative Commons licences.

When you search for questions or exams, you can filter the results by what you’re allowed to do with them – freely reuse, modify, or reuse commercially. If you apply any of these filters, content with no licence specified will be excluded, so please make sure you specify a licence for your material!

Happily, everything produced at Newcastle University and uploaded to the mathcentre Numbas editor is available under a CC BY (free to reuse with attribution) licence.

Note that these licences aren’t enforced by the software – they’re just there to help people do the right thing when compiling open-access resources. If you don’t want your material to be publicly available, the solution is still to use the access controls to make your questions and exams hidden by default.

Since I’m here, I’ll do a general development log as well.

We’ve got a pretty big new feature for Numbas this week – a whole new part type! The matrix entry part asks the student to enter a matrix.

In the past, we’ve hacked together questions where we ask the student to enter a matrix by putting a load of gapfills in a table. That was very laborious to set up, and the user experience for the student wasn’t particularly great.

The matrix entry part is meant to make all this a lot smoother – you give a JME matrix variable as the correct answer, and the student is shown an (optionally resizable) grid of cells to enter their answer.

It works like this:

The student picks the size of their answer, then enters numbers in each of the cells. It’s marked as correct if the matrix they give exactly matches the one you give.

If you want, you can lock the size of the answer matrix, so the student doesn’t have to be shown the size picker interface. That looks like this:

There are a few other options:

- You can award marks in proportion to the number of correct cells, instead of a simple pass/fail.

- You can mark cells as correct if they’re within a given margin of error of the correct answer.

- The precision restriction options from the number entry part are also available here.

- You can allow the student to enter their answers as fractions (this is also available for number entry parts now).
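To make the marking options concrete, here is a minimal sketch in Python of how such a part could be marked. It is an illustration only, not the Numbas marking code: the function names `parse_cell` and `mark_matrix` and their parameters are hypothetical, and giving zero marks for an answer of the wrong size is an assumption.

```python
from fractions import Fraction


def parse_cell(text):
    """Read a cell typed by the student, accepting forms like '3', '0.5' or '1/2'."""
    return Fraction(text.strip())


def mark_matrix(student, correct, max_marks=1.0, tolerance=0.0, partial=False):
    """Return the marks awarded for a student's matrix (a list of rows)."""
    # Assumption: an answer of the wrong size earns no marks.
    if len(student) != len(correct):
        return 0.0
    if any(len(srow) != len(crow) for srow, crow in zip(student, correct)):
        return 0.0
    # Pair up corresponding cells and count those within the margin of error.
    cells = [(s, c) for srow, crow in zip(student, correct) for s, c in zip(srow, crow)]
    if not cells:
        return 0.0
    n_correct = sum(1 for s, c in cells if abs(s - c) <= tolerance)
    if partial:
        # Marks in proportion to the number of correct cells.
        return max_marks * n_correct / len(cells)
    # Simple pass/fail: every cell must be correct.
    return max_marks if n_correct == len(cells) else 0.0


correct = [[1, 2], [3, 4]]
print(mark_matrix([[1, 2], [3, 5]], correct))                # pass/fail marking
print(mark_matrix([[1, 2], [3, 5]], correct, partial=True))  # proportional marking
```

With `tolerance=0.0` and `partial=False` this reduces to the default behaviour described above: the answer is correct only if it exactly matches the expected matrix.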

You can see all that in action in this demo exam I created, containing a few simple-ish matrix entry questions. And of course, there’s documentation.

One thing that many people have asked for over the years is the ability to check whether the variables generated by a question have a desired property, and “re-roll” them if not. I’ve been reluctant to implement this because it can lead to sloppy question design when a method that’s guaranteed to work can often be found with a little bit of thinking.
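The trade-off can be sketched in Python (this is an illustration, not Numbas code; `reroll`, `has_integer_roots` and `from_roots` are hypothetical names). Suppose a question needs a quadratic x² + bx + c with integer roots. One approach is to generate b and c at random and re-roll until the condition holds; the other is to pick the roots first, which is guaranteed to work.

```python
import math
import random


def has_integer_roots(coefficients):
    """True if x^2 + b*x + c has integer roots, i.e. b^2 - 4c is a perfect square."""
    b, c = coefficients
    disc = b * b - 4 * c
    if disc < 0:
        return False
    root = math.isqrt(disc)
    return root * root == disc


def reroll(generate, condition, max_tries=1000):
    """Regenerate the variables until they satisfy the condition, or give up."""
    for _ in range(max_tries):
        values = generate()
        if condition(values):
            return values
    raise RuntimeError("no acceptable set of variables found")


def from_roots(rng):
    """Constructive alternative: choose the roots, then derive b and c."""
    p, q = rng.randint(-5, 5), rng.randint(-5, 5)
    return (-(p + q), p * q)


rng = random.Random(0)
rerolled = reroll(lambda: (rng.randint(-9, 9), rng.randint(-9, 9)), has_integer_roots)
constructed = from_roots(rng)
print(rerolled, constructed)
```

Both approaches produce valid coefficients, but the re-rolling version needs a limit on the number of attempts and can, in principle, fail, while the constructive version succeeds on the first try.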


Here’s another round-up of recent development work on Numbas.

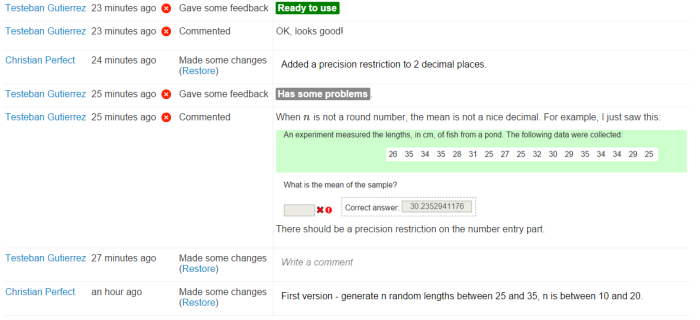

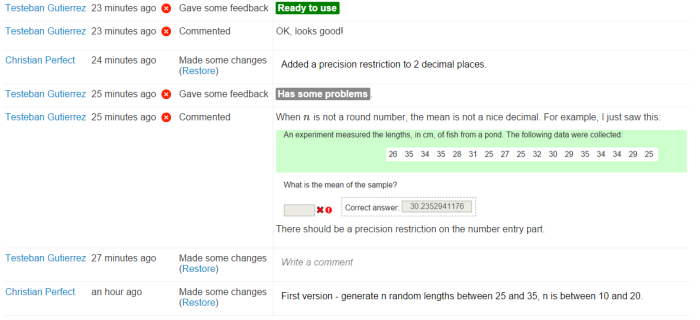

Version tracking

The big new feature is version tracking – every change made to a Numbas question or exam is saved in the database, and you can revert to old versions in the new “Editing history” tab.